Intermediate Layer Optimization for Inverse Problems using Deep Generative Models

Intro

Given a corrupted or noisy image, how can we improve its resolution and obtain faster image reconstruction algorithms? Modern deep generative models such as GANs have demonstrated excellent performance in representing high-dimensional distributions and can therefore “create” lifelike images. In this project, we show how deep generative models can be used to solve more advanced image reconstruction problems such as denoising, inpainting (completing missing data), and recovery from linear projections. The key is to model each of these tasks as an inverse problem.
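To make the inverse-problem view concrete, the following is one common way to write it down. The notation is our assumption for illustration and does not appear in the text above: x* is the unknown image, A is the forward (corruption) operator, η is noise, y is the observation, and G is a pretrained generator with latent code z.

```latex
% Observation model: we only see a corrupted version of the true image.
y = A(x^{*}) + \eta

% Reconstruction with a generative prior: search the range of G for an
% image whose corrupted version matches the observation.
\hat{z} = \arg\min_{z} \; \bigl\| A\bigl(G(z)\bigr) - y \bigr\|_2^2,
\qquad
\hat{x} = G(\hat{z})
```

Under this reading, denoising, inpainting, and recovery from linear projections differ only in the choice of A.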

Our work relates to a core foundational IFML theme, namely learning structure in real-world data. A classical model of structure in real-world data has been sparsity. Here we go beyond sparsity to a more general notion: natural images lie in the range of a generative model (usually a deep neural network). Our work establishes provable sample complexity bounds and approximation guarantees for inverse problems using these models, and it inspires a number of heuristics that give state-of-the-art performance in practice. In particular, we can apply these techniques to one of our core use-inspired themes: imaging (we give several examples below).

Fundamental algorithmic innovation: Intermediate layer optimization

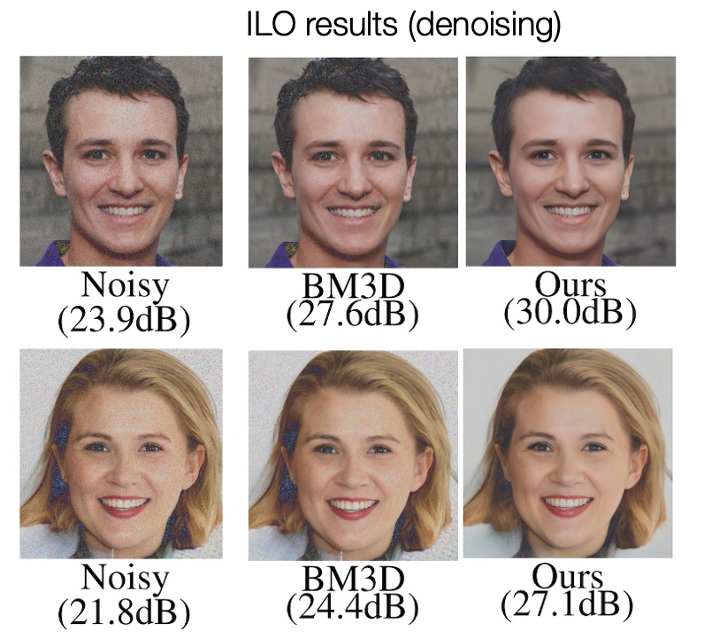

We propose Intermediate Layer Optimization (ILO), a novel optimization algorithm for solving inverse problems that leverages deep generative priors. Instead of optimizing only over the initial latent code, we progressively change the input layer, obtaining successively more expressive generators. To explore the higher-dimensional spaces, our method searches for latent codes that lie within a small ℓ1 ball around the manifold induced by the previous layer. Our theoretical analysis shows that by keeping the radius of the ball relatively small, we can improve the established error bound for compressed sensing with deep generative models. We empirically show that our approach outperforms state-of-the-art methods introduced in StyleGAN2 and PULSE for a wide range of inverse problems, including inpainting, denoising, super-resolution, and compressed sensing.
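The two-stage idea described above can be sketched on a toy problem. This is a minimal illustration under strong assumptions, not the authors' implementation: the generator is a hypothetical two-layer map (`g1` with tanh, `g2` linear) with random weights, the forward operator is an inpainting mask, and the ℓ1 ball is centered at the single point `g1(z)` found in the first stage rather than around the whole manifold. All function and variable names are invented for this sketch.

```python
import math
import random

def matvec(M, v):
    return [sum(a * b for a, b in zip(row, v)) for row in M]

def matTvec(M, v):
    # Computes M^T v without forming the transpose.
    return [sum(M[i][j] * v[i] for i in range(len(M))) for j in range(len(M[0]))]

def project_l1_ball(v, radius):
    """Euclidean projection of v onto {u : ||u||_1 <= radius} (sort-based)."""
    if radius <= 0:
        return [0.0] * len(v)
    if sum(abs(x) for x in v) <= radius:
        return list(v)
    mags = sorted((abs(x) for x in v), reverse=True)
    cumsum, theta = 0.0, 0.0
    for k, mag in enumerate(mags, start=1):
        cumsum += mag
        t = (cumsum - radius) / k
        if mag > t:
            theta = t  # the last k passing this test gives the threshold
    return [math.copysign(max(abs(x) - theta, 0.0), x) for x in v]

def g1(W1, z):
    # First generator layer (toy): latent code -> intermediate activation.
    return [math.tanh(a) for a in matvec(W1, z)]

def g2(W2, h):
    # Remaining generator layers (toy, linear): activation -> image.
    return matvec(W2, h)

def loss_and_grad_h(W2, h, mask, y):
    # 0.5 * ||mask * g2(h) - y||^2 and its gradient with respect to h.
    x = g2(W2, h)
    r = [m * (xi - yi) for m, xi, yi in zip(mask, x, y)]
    return 0.5 * sum(ri * ri for ri in r), matTvec(W2, r)

def ilo_sketch(W1, W2, mask, y, radius, steps=200, lr=0.1):
    # Stage 1: gradient descent over the initial latent code z.
    z = [0.0] * len(W1[0])
    for _ in range(steps):
        h = g1(W1, z)
        _, gh = loss_and_grad_h(W2, h, mask, y)
        gz = matTvec(W1, [(1.0 - hi * hi) * gi for hi, gi in zip(h, gh)])
        z = [zi - lr * gi for zi, gi in zip(z, gz)]
    h0 = g1(W1, z)
    loss1, _ = loss_and_grad_h(W2, h0, mask, y)
    # Stage 2: optimize the intermediate activation directly, keeping it
    # inside an l1 ball around h0 via projected gradient descent.
    delta = [0.0] * len(h0)
    for _ in range(steps):
        h = [a + d for a, d in zip(h0, delta)]
        _, gh = loss_and_grad_h(W2, h, mask, y)
        delta = project_l1_ball([d - lr * g for d, g in zip(delta, gh)], radius)
    h = [a + d for a, d in zip(h0, delta)]
    loss2, _ = loss_and_grad_h(W2, h, mask, y)
    return z, h, delta, loss1, loss2

random.seed(0)
k, m, n = 2, 6, 8  # latent, intermediate, and image dimensions (toy sizes)
W1 = [[random.uniform(-1, 1) for _ in range(k)] for _ in range(m)]
# Entries scaled by 1/sqrt(m) so that lr = 0.1 is a safe step size in stage 2.
W2 = [[random.uniform(-1, 1) / math.sqrt(m) for _ in range(m)] for _ in range(n)]
h_true = g1(W1, [0.5, -0.3])
h_true[2] += 0.4  # push the target slightly outside the range of g1
x_true = g2(W2, h_true)
mask = [1, 1, 0, 1, 1, 0, 1, 1]  # two pixels are unobserved
y = [mi * xi for mi, xi in zip(mask, x_true)]

z_hat, h_hat, delta, loss1, loss2 = ilo_sketch(W1, W2, mask, y, radius=0.5)
print("loss after latent-only stage:", loss1)
print("loss after intermediate-layer stage:", loss2)
```

Because the second stage starts from the first-stage solution and can only move within the ℓ1 ball, it can fit targets that lie slightly outside the range of the full generator while staying close to it. A real generator has more layers, and the same step would be repeated layer by layer.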

Results

Our algorithm can be directly applied to any inverse problem with a differentiable forward operator. This includes denoising, super-resolution, inpainting and compressed sensing with linear projections. Our experiments demonstrate excellent performance across this wide range of applications.
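As a rough illustration of what "differentiable forward operator" means for the four tasks listed above, the sketch below writes each one as a simple linear map on a 1-D signal. The function names, the averaging downsampler, and the small matrix `A` are our assumptions for illustration; real pipelines operate on image tensors and may use different operators.

```python
def denoise_op(x):
    # Denoising: the forward operator is the identity (noise is added after).
    return list(x)

def inpaint_op(x, mask):
    # Inpainting: a 0/1 mask zeroes out the unobserved entries.
    return [m * xi for m, xi in zip(mask, x)]

def downsample_op(x, factor):
    # Super-resolution: observe block averages of the signal.
    return [sum(x[i:i + factor]) / factor
            for i in range(0, len(x) - factor + 1, factor)]

def compressed_sensing_op(x, A):
    # Compressed sensing: observe a few linear projections A @ x.
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def measurement_loss(forward, x, y):
    # The same squared-error objective applies to every operator above.
    return sum((f - yi) ** 2 for f, yi in zip(forward(x), y))

x = [1.0, 2.0, 3.0, 4.0]
A = [[1, 0, 1, 0], [0, 1, 0, -1]]
print(inpaint_op(x, [1, 0, 1, 1]))
print(downsample_op(x, 2))
print(compressed_sensing_op(x, A))
```

Since each operator is linear, gradients of `measurement_loss` with respect to the image flow back to whichever generator layer is being optimized, so only the operator needs to change between tasks.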

Future Work

Our ongoing and future work includes applications to medical imaging, specifically MRI acceleration and super-resolution (joint with the Dell Medical School).

Acknowledgements: This research has been supported by the NSF AI Institute for Foundations of Machine Learning (IFML), NSF Grants CCF 1934932, AF 1901292, 2008710, 2019844, research gifts by Western Digital, computing resources from TACC, and the Archie Straiton Fellowship.